Anthropic Removes Extra Fees for Large Context Windows in New AI Models

Changes to pricing for Opus 4.6 and Sonnet 4.6 may improve access to large-scale language model capabilities for developers and businesses.

Introduction

Recent developments in artificial intelligence continue to reshape how developers and organizations build digital tools. One update attracting attention in the technology community comes from Anthropic, an AI research company known for developing advanced language models.

According to reporting from The Decoder, Anthropic has removed additional charges previously associated with very large context windows in its AI models. This change affects two of the company’s prominent systems: Claude Opus 4.6 and Claude Sonnet 4.6.

The decision could make advanced AI capabilities more accessible to developers who rely on large context windows for complex tasks. By reducing costs associated with processing long documents and large datasets, the update may influence how AI tools are used in research, programming, and data analysis.

Understanding Context Windows in AI Models

To understand the significance of this update, it is important to know what a context window is. In language models, the context window is the amount of text the model can take into account in a single request, including the prompt and any earlier conversation.

A larger context window allows an AI system to analyze longer documents, maintain more conversation history, and handle complex prompts. For example, a developer may ask an AI model to review a long technical report or analyze a large codebase.

When the context window is limited, the system can only process a smaller portion of information at once. This may require breaking large tasks into smaller parts, which can slow down workflows.
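The splitting step described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular platform does it: the function name `split_into_chunks` is hypothetical, and the four-characters-per-token ratio is only a rough rule of thumb for English text, since the real count depends on the model's tokenizer.

```python
def split_into_chunks(text, max_tokens, chars_per_token=4):
    """Greedily pack whole words into chunks that fit an approximate token budget.

    chars_per_token is an assumed average, not a property of any real tokenizer.
    """
    if max_tokens <= 0:
        raise ValueError("max_tokens must be positive")

    max_chars = max_tokens * chars_per_token
    chunks = []
    current_words = []
    current_len = 0

    for word in text.split():
        # Account for the space that joins this word to the previous one.
        added = len(word) + (1 if current_words else 0)
        if current_words and current_len + added > max_chars:
            chunks.append(" ".join(current_words))
            current_words = [word]
            current_len = len(word)
        else:
            current_words.append(word)
            current_len += added

    if current_words:
        chunks.append(" ".join(current_words))
    # Note: a single word longer than max_chars still becomes its own chunk.
    return chunks
```

Each chunk then needs its own request, and the results have to be combined afterward, which is the extra workflow cost a larger context window avoids.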

Large context windows therefore provide important advantages for users working with extensive text or data.

The One-Million-Token Capability

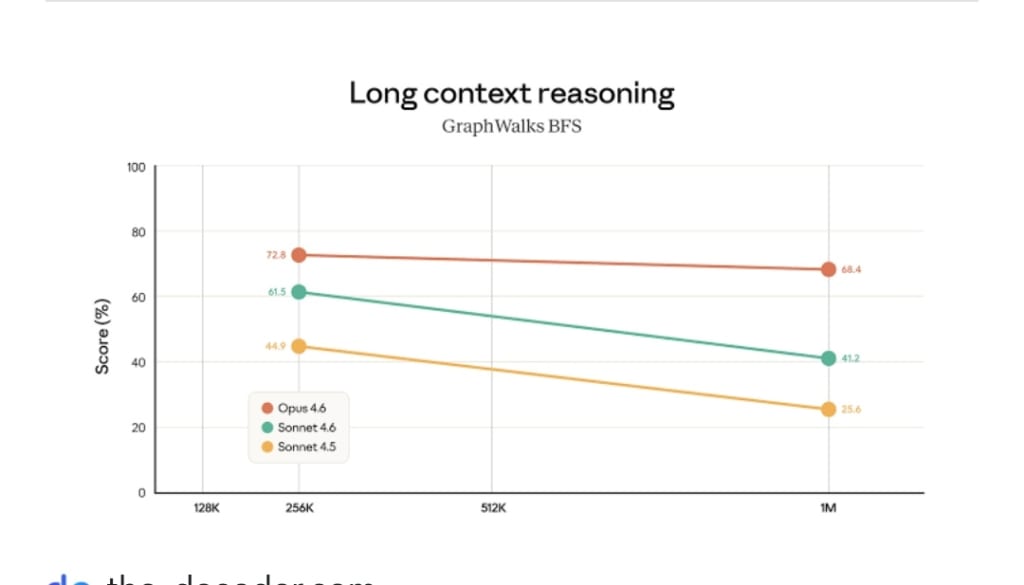

Some modern AI models are capable of processing extremely large inputs. Anthropic has developed systems that support context windows of up to one million tokens.

A token is a unit used by language models to represent pieces of text. Tokens may correspond to whole words, parts of words, or individual characters, depending on the model’s tokenizer.

When a model supports one million tokens, it means that the system can analyze very large documents or extended conversations in a single request. This feature can be useful for researchers, software developers, and analysts who need to examine complex information.
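To get a feel for that scale, a rough pre-check can estimate whether a piece of text would fit within a one-million-token limit. The sketch below assumes about four characters per token, a common approximation for English that is not exact for any specific model; the function names are hypothetical, and a real application would use the provider's own token counting instead.

```python
import math

CHARS_PER_TOKEN = 4             # assumed average; actual tokenizers vary
ONE_MILLION_TOKENS = 1_000_000  # the context size discussed in the article


def estimate_tokens(text, chars_per_token=CHARS_PER_TOKEN):
    """Return a rough token estimate based only on character count."""
    return math.ceil(len(text) / chars_per_token)


def fits_in_context(text, context_limit=ONE_MILLION_TOKENS):
    """Check whether the estimated token count stays within the limit."""
    return estimate_tokens(text) <= context_limit
```

Under this assumption, one million tokens corresponds to roughly four million characters of English text.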

Previously, using such large context windows often came with additional charges.

Previous Pricing Structure

Before the recent update, many AI platforms applied extra costs when developers used the largest context windows. These charges reflected the additional computing resources required to process large amounts of text.

Processing longer inputs demands more memory, more computational power, and longer processing time. Because of these technical requirements, companies often added surcharges for very large prompts.

While this pricing model helped cover infrastructure costs, it also created financial barriers for smaller organizations or independent developers who wanted to experiment with large-scale AI capabilities.

Anthropic’s decision to remove these surcharges represents a notable shift in pricing strategy.

Changes Introduced by Anthropic

The new policy removes the extra fee associated with million-token context windows for the company’s latest models. As a result, developers can use the full context capacity of Claude Opus 4.6 and Claude Sonnet 4.6 without paying additional charges.

This change effectively lowers the cost of processing large documents or datasets. Developers can now run complex AI tasks that involve extensive input without worrying about separate pricing tiers for context length.
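The difference between a surcharge model and a flat model can be illustrated with simple arithmetic. The article does not give the actual rates or explain how the surcharge was structured, so every number and the billing rule below are hypothetical placeholders chosen only to show the shape of the calculation.

```python
def flat_cost(input_tokens, rate_per_million):
    """Cost when every request is billed at the same per-token rate."""
    return input_tokens / 1_000_000 * rate_per_million


def surcharge_cost(input_tokens, rate_per_million, threshold, multiplier):
    """Cost under an assumed surcharge rule.

    Assumption: once a request exceeds `threshold` tokens, the whole
    request is billed at `rate_per_million * multiplier`.
    """
    if input_tokens > threshold:
        return flat_cost(input_tokens, rate_per_million * multiplier)
    return flat_cost(input_tokens, rate_per_million)


# Hypothetical values, not Anthropic's actual prices.
RATE = 2.0            # currency units per million input tokens
THRESHOLD = 200_000   # tokens above which the surcharge would apply
MULTIPLIER = 2.0      # surcharge factor

long_request = 1_000_000
print(flat_cost(long_request, RATE))
print(surcharge_cost(long_request, RATE, THRESHOLD, MULTIPLIER))
```

With these placeholder values, the long request costs twice as much under the surcharge rule as under flat pricing, while a short request costs the same either way.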

Technology analysts say the decision may encourage more experimentation and wider adoption of large-context AI models.

Benefits for Developers

Developers are among the primary beneficiaries of this update. Many software projects involve analyzing long pieces of information such as technical documentation, research reports, or large code repositories.

With access to larger context windows at lower cost, developers can design tools that handle complex workflows more efficiently. For example, an AI assistant might review an entire project folder rather than individual files.
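As a rough sketch of the project-folder example, the snippet below gathers source files into a single prompt string while staying under an approximate token budget. The function name, the file-header format, and the four-characters-per-token estimate are all assumptions made for illustration; sending the prompt to a model is left out because the article does not describe any API.

```python
from pathlib import Path


def build_project_prompt(root, suffixes=(".py", ".md"),
                         max_tokens=1_000_000, chars_per_token=4):
    """Concatenate matching files under `root` into one prompt string.

    Stops adding files once the assumed character budget would be exceeded.
    """
    budget_chars = max_tokens * chars_per_token
    parts = []
    used = 0

    for path in sorted(Path(root).rglob("*")):
        if not path.is_file() or path.suffix not in suffixes:
            continue
        body = path.read_text(encoding="utf-8", errors="replace")
        # Label each file so the model can tell where one ends and the next begins.
        section = f"### {path.relative_to(root)}\n{body}\n"
        if used + len(section) > budget_chars:
            break
        parts.append(section)
        used += len(section)

    return "".join(parts)
```

With a small context window the budget is reached after a handful of files; with a much larger one, the same loop can often include an entire small or medium-sized repository.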

This capability can simplify tasks such as debugging software, summarizing long documents, or generating insights from large datasets.

Developers may also find it easier to build applications that rely on extended conversation history.

Impact on Businesses

Businesses increasingly rely on artificial intelligence to improve productivity and decision-making. Large language models are used in customer support systems, research analysis, and knowledge management platforms.

The ability to process large volumes of information in a single request can reduce the need for multiple steps in AI workflows. For example, a company could analyze a full contract or report without dividing it into smaller segments.

Lower pricing for large context windows may therefore encourage companies to expand their use of AI tools across departments.

Organizations working in fields such as law, finance, and research may find particular value in this capability.

Competition in the AI Industry

The artificial intelligence industry has become highly competitive. Several companies are developing large language models with advanced capabilities.

Pricing decisions can play an important role in attracting developers and businesses. By reducing the cost of large context windows, Anthropic may strengthen its position in the market.

Technology companies often adjust pricing structures to encourage adoption of new products or features. Lower costs can help developers experiment with new technologies and build applications that rely on advanced AI capabilities.

Observers note that similar strategies have been used by other technology companies to expand their user base.

Research and Education Opportunities

The update may also benefit researchers and educational institutions. Universities and research groups often analyze large datasets, long research papers, and technical archives.

Access to large context windows at lower cost could help researchers explore new methods for analyzing information. For example, an AI system might examine a full collection of academic papers to identify patterns or summarize findings.

Students learning about artificial intelligence may also benefit from easier access to powerful tools. Educational projects often require experimentation with large data inputs.

Lower pricing could therefore encourage more innovation in academic environments.

Technical Considerations

While removing surcharges makes large context windows more accessible, the technical challenges of processing large inputs remain. Running AI models with million-token capacity requires powerful infrastructure.

Cloud computing resources, specialized processors, and efficient data management systems all play roles in supporting such operations.

Anthropic and similar companies invest heavily in research and engineering to maintain reliable performance at these scales.

Developers using these capabilities must also design applications carefully to ensure that large inputs are processed effectively.

Future Developments in AI Models

The evolution of context windows is part of a broader trend in artificial intelligence development. Researchers continue to explore ways to increase the amount of information models can process while maintaining accuracy and efficiency.

Future models may support even larger context windows or improved methods for retrieving information from long documents.

Advances in hardware and optimization techniques could also reduce the computational cost of processing large inputs.

These developments may expand the range of tasks that AI systems can perform in fields such as research, software development, and data analysis.

Conclusion

The decision by Anthropic to remove surcharges for million-token context windows represents an important development in the AI industry. By lowering the cost of using large context capabilities in Claude Opus 4.6 and Claude Sonnet 4.6, the company is making advanced tools more accessible to developers, businesses, and researchers.

As reported by The Decoder, this change could encourage wider experimentation with large-scale language models and support new applications that require extensive text analysis.

Large context windows allow AI systems to process complex information more efficiently, which can improve productivity in many professional fields.

As artificial intelligence technology continues to evolve, pricing decisions and technical innovations will shape how organizations adopt and use these tools in the future.

About the Creator

Saad

I’m Saad. I’m a passionate writer who loves exploring trending news topics, sharing insights, and keeping readers updated on what’s happening around the world.
